It will no doubt stun my children to realize that their oh-so-young-looking mother lived at a time when the majority of households lacked a personal computer. I mean, personal computers existed, but they were the size of a microwave oven (or larger) and cost more than a few car payments. As a result, most kids’ only exposure came in the computer lab at school. Thanks to the laws of supply and demand, the lack of a household market also limited the number of available programs, especially those designed for young people. However, our school system managed to find one that both educated and entertained (it helped that it came bundled with the operating system). It was called – Oregon Trail.

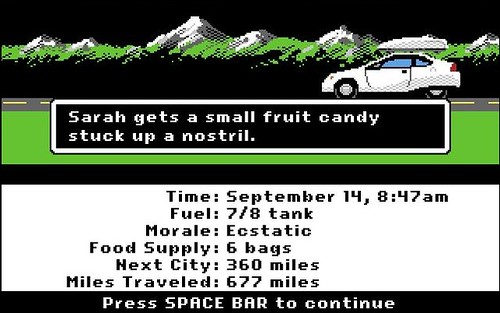

The premise was this – you started out in Missouri in the 1800s as an intrepid settler determined to journey to Oregon’s Willamette Valley, a mere 2,170-mile (3,490 km) jaunt to the western side of the USA, by covered wagon with only your family, a bit of cash, and whatever you could carry. Along the way you had to deal with challenges such as broken axles, fording streams, and a little thing called death, going by the names of typhoid, dysentery, drowning, starvation, and/or snake bite.

It’s good old-fashioned fun for the whole family!

Imagine my delight, then, to find that the powers that be, hoping to tap into the current nostalgia trend (as evidenced by the recent remakes and reunions of movies and shows from my youth), had produced an analog remaster: Oregon Trail – The Card Game. When buying it for a friend, I couldn’t hand over my money fast enough.

We laid out the cards. We attempted to read through the rules. I may have drunk too much wine. Somehow, before we called it a night, we managed to play two games without my character dying once. It was a feat I’d rarely managed in the computer version, and the game served as a fun reminder of how far society, as well as our technology, has come.

My sons, on the other hand, have never known life before computers small enough to carry in your pocket, and it has become easier to list the families without a smartphone than those with one. As a result, this little device has gone from a luxury item to a tool more necessary to the smooth functioning of my household, and my community, than the mailbox outside our door.

For example, Kiddo’s school required me to download not one but three apps just to handle daily communication. There is Remind, an app used for school-wide memos such as upcoming teacher workdays, class photos, and past-due library book notices; Shutterfly Sites, a program containing his class contact list and volunteer / school supply sign-ups; and his teacher’s personal favorite, Class Dojo, which allows her to post pictures of their educational day, highlight individual student performance, and save paper by replacing printed weekly newsletters. As a result, the majority of my phone’s notifications are school-based, not that I’m complaining.

So I didn’t think anything of it when I received a message from Kiddo’s teacher indicating that the attached letter would also be coming home in Kiddo’s school bag. However, on opening the attachment, I realized this was not just another notice about a school fundraiser or upcoming assignment. Oh no, nothing fun like that at all. Lice had been found in Kiddo’s classroom.

The rest of the letter went through the basics – how the very real version of cooties can make their homes on the scalps of adults and children alike, regardless of cleanliness or personal care, and what to do if you see nits in your child’s hair. We checked that Kiddo hadn’t been colonized as soon as he came home, again the next morning, and once more the following day. And yet, even though I knew he hadn’t brought any uninvited guests home, my scalp started itching just thinking about the words on that page.

Admit it. You are considering scratching your head now too after reading this.

Which proves that while trends come and go and methods of communication evolve, the words we use will remain ever powerful – so use yours well.

And always be on the lookout for snakes.

That particular “L” word is forbidden in my home. I dread each and every one of those letters. I am traumatized by your post.

I was really hoping we’d seen the last of those letters once he was out of kindergarten, but, to the letter’s point, lice affect people of all ages. I guess it could have been worse. It could have been the dreaded norovirus letter. Then again, at least that would give me the chance to lose some weight before swimsuit season.

You are so right. As soon as you mentioned lice I scratched my head. And I’m bald. There is no place for the lice to live on my completely hairless scalp and yet… the power of suggestion.

Crazy how that works. I’ve had my husband check my hair three times since starting this post.

LOL

It’s amazing how we almost need computers to function these days. I was about 30 when we got our first home computer, and it was not very friendly! Hopefully, Allie, you will avoid typhoid, dysentery, drowning, starvation, snake bites, and nits!

When you set a benchmark for a successful day as low as that, I am bound to have a great day. Thanks!

Ha ha ha ha. Yes, indeed.

Ick. You have the most up-to-date communication systems to tell you the most same-as-it-ever-was news. Everything new tells you something old again. 😉

Kiddo is currently studying animal life cycles, which has meant daily pictures in my inbox of their class caterpillar’s growth. Super special for me!

Drinking Oregon Trail 😂 every time you go hunting 🐂🍸

Now that you mention it, we didn’t have to go hunting once while playing the game… or did we? Hmmm, can’t say I entirely remember.

Is it like the modern Oregon Trail? Did you just hit McDonald’s on the way?

No, it is just like the original game. We just didn’t pull those cards.

Funny stuff. Phones are meant to simplify our lives, but it seems they’re making them more complicated.

There have been so many times I wished we didn’t have the expectation of immediate accessibility.

And the feeling of needing to respond to everything immediately.

Yes. That!

We played the Oregon Trail card game so many times on New Year’s. I may or may not have found a sad trombone sound to play whenever someone died.

Note to self – download sad trombone.

Clearly you are my kind of party gamer.

Didn’t scratch my head, but did give me a great idea for a short story. 😀

What is your secret? A breeze blows a hair and I’m twitchy about it. 🙂

Looking forward to the story!

I’m guessing it’s the way my writing has gone more and more to the dark side. I’ve written about bugs before, but I think they need a comeback.

Yeah, bugs, especially the pest kind, always seem to come back no matter how many times you think you’ve vanquished them.

They’ll still be here when the human race is long gone.

Truth. When we finally do find life on another planet, it is most likely going to be of the cockroach variety.

Starship Troopers style. 😀

Exactly.

Loved this post, Allie! It reminded me of another childhood game that my wife and I now play with our four children (all over 40). It’s called Uno, and we play the Attack Uno version that is now sold in stores. It still uses cards, but it has a mechanical device that spits out cards either one at a time, or in multiples, at the push of a button. If you haven’t tried it, I suggest you do. And no amount of wine consumed will prohibit you from playing as well or as poorly as your offspring. Uh oh, forgot to say Uno!! 🙂

Oh, I am very familiar with that one. Lots of fun!

I am very fortunate to have gotten two kids through school (well, one is still a junior) without ever having to worry about lice. Thankfully.

Also: I remember the Oregon Trail game! Fun stuff.

The rain must keep them away 🙂

Wasn’t that such a great game for its time?

I nominated you! http://www.theexcitedwriter.com/blogger-recognition-award/

Thank you so much for the nomination and congratulations on your own!

Excuse me, but I did NOT get a fruit candy stuck up a nostril! Ever. What do you think of me? I’ve never heard of Oregon Trail, but we got a computer pretty late and it was large. I know this feeling: “My sons, on the other hand, have never known life before computers small enough to carry in your pocket…” I played Atari’s “Pong”. I recall playing it on a b&w TV.

Okay! AAAHH! I was commenting as I was reading instead of finishing the post first! *&^%$#@!!!!!!!!!!!!! LICE! Frick! I can’t even! Thanks SO MUCH! *shudders*

I’m trying to reply, but all I am doing is laughing. So. Hard.

Blast it all! Bloody hell! That is NOT nice, Allie Potts! Shame on you!

Right. Sorry. Must make amends. Hmmm.

Okay first, you need to play Oregon Trail. How you managed to avoid it baffles me.

Wait, that is the opposite of amends.

Okay, take two – Dang it, still have nothing.

Both kids are in middle school, but we haven’t been required to download apps for school or classroom news yet. We do get email blasts every day, though, and my son’s “team” does a thing called “Friday Folders” where everything of importance comes home in a folder on Friday! The lice thing makes me gag. When I volunteer at the school, it’s easy to tell if a classroom is affected by a lice outbreak because the backpacks and coats are kept in the hallway in plastic bags. Apparently, lice can be suffocated.

That’s really good to know about the plastic bag trick.

We also have a weekly folder that comes home with his past week’s in-class work and progress reports, so there is still some old-fashioned communication too. His teacher this year seems to be a particular advocate of going paperless, though, as most of his homework assignments are online as well.

EWWWWWWWWWWW. God, I remember it well from my school days. Being a girl with waist-length hair back then, I got it. SEVERAL times. *weeps* At least with boys you can shave their hair off!

I’ve had them myself and they are such a pain. Guys truly do not know how lucky they are to get away with the head shave.
